Everyone is Deploying AI Agents. Who is Governing Them?

What if the bottleneck in your AI strategy is not the model but the missing governance layer? 11 data points that tell the story.

Enterprises raced to deploy AI agents in 2025. In 2026, they are discovering that those agents don’t work as well as expected, not because the agents were solving the wrong problems, but because they were not being governed properly. Only 14% of agents require human approval before deployment, 88% of companies report agent security incidents, and just 6% of security budgets address the risk.

Here are 11 data points that expose the crisis, along with the counterintuitive strategy you can use to pull ahead.

1. Your Enterprise is Deploying AI Agents Faster than it Can Govern Them, and the Numbers Are Staggering

Mayfield's 2026 CXO survey of 266 Fortune 50 to Global 2000 leaders revealed the scale of the governance gap.

The average organization manages 37 deployed agents, and that number grows every quarter without a central review.[1] & [2]

2. 88% of Enterprises Have Already Had an AI Agent Security Incident, and Most Didn’t See it Coming

The Gravitee 2026 report found that security incidents are widespread across industries, with the healthcare sector particularly affected.

Most enterprises are deploying agents without complete security oversight.[3]

3. Meta’s Own AI Agent Went Rogue in March, Posting Unauthorized Advice That Triggered an Internal Security Breach

An AI agent inside Meta posted a response to an employee without direction. That employee followed the advice, giving engineers access to restricted systems. The breach lasted two hours.[4]

Separately, Summer Yue, Director of Alignment at Meta Superintelligence Labs, lost control of an OpenClaw agent that deleted her emails, ignoring repeated commands to stop.[5]

4. Non-Human Identities Now Outnumber Human Employees

At Palo Alto Networks, machines and agents outnumber human employees 82-to-1.

A Cloud Security Alliance (CSA) and Oasis Security survey found most companies have no formal policies for creating or removing AI identities, and very few treat agents as independent identity-bearing entities.[6] & [7]

5. AI Agents - The Number One Insider Threat of 2026

Wendi Whitmore, Chief Security Intelligence Officer at Palo Alto Networks, called AI agents the single biggest insider threat of 2026. A compromised agent is indistinguishable from a functioning one, and it can map a permission graph and exploit its weakest node in seconds.

A supply chain attack on the OpenAI plugin ecosystem compromised multiple enterprise deployments through a poisoned plugin.[8] & [9]

6. Companies with Governance Frameworks Push 12X More AI Into Production

Databricks' 2026 report: companies using AI governance tools get over 12x more projects into production. Evaluation tools deliver a 6x multiplier.

Deloitte confirms that enterprises where leadership shapes governance achieve greater business value. Yet only one in five has a mature governance model.[10] & [11]

7. 97% of Enterprise Security Leaders Expect a Major AI Agent Incident Within 12 Months

Arkose Labs' 2026 report: [12] & [13]

- 97% expect a material AI-agent-driven incident within 12 months

- Only 6% of security budgets address it

- Shadow AI breaches cost $4.63 million per incident, $670,000 more than standard breaches

8. The Real Problem is Not the Model

When an agent calls an API, writes to a database, or triggers a workflow, most enterprises have no risk scoring, no policy enforcement, and no audit trail.
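To make the three missing controls concrete, here is a minimal sketch of an execution-layer gate that scores each agent action, applies a policy decision, and records an audit entry. Everything in it is an illustrative assumption rather than a reference to any vendor's product: the `AgentAction` type, the `RISK_WEIGHTS` table, and both thresholds are hypothetical and would need to come from your own policy.

```python
import time
from dataclasses import dataclass

# Hypothetical base risk per action type; real weights would come from policy.
RISK_WEIGHTS = {"read": 1, "api_call": 3, "db_write": 6, "workflow_trigger": 8}
SENSITIVE_PENALTY = 4     # assumed extra risk when the target holds sensitive data
APPROVAL_THRESHOLD = 5    # assumed: scores at or above this need a human
DENY_THRESHOLD = 10       # assumed: scores at or above this are blocked


@dataclass
class AgentAction:
    agent_id: str
    kind: str              # expected to be one of the RISK_WEIGHTS keys
    target: str            # e.g. a table name or an endpoint
    sensitive: bool = False


def score(action: AgentAction) -> int:
    """Risk scoring: unknown action kinds get the highest base weight."""
    base = RISK_WEIGHTS.get(action.kind, max(RISK_WEIGHTS.values()))
    return base + (SENSITIVE_PENALTY if action.sensitive else 0)


def decide(action: AgentAction) -> str:
    """Policy enforcement: map a risk score to allow / needs_approval / deny."""
    risk = score(action)
    if risk >= DENY_THRESHOLD:
        return "deny"
    if risk >= APPROVAL_THRESHOLD:
        return "needs_approval"
    return "allow"


def gate(action: AgentAction, audit_log: list) -> str:
    """Audit trail: record every decision, including the allowed ones."""
    decision = decide(action)
    audit_log.append({
        "ts": time.time(),
        "agent_id": action.agent_id,
        "kind": action.kind,
        "target": action.target,
        "risk": score(action),
        "decision": decision,
    })
    return decision


audit_log = []
gate(AgentAction("agent-7", "api_call", "crm/contacts"), audit_log)
gate(AgentAction("agent-7", "db_write", "orders"), audit_log)
gate(AgentAction("agent-7", "db_write", "payroll", sensitive=True), audit_log)
```

The design choice worth noting is that the gate sits between the agent and the system it acts on, so it applies regardless of which model produced the action.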

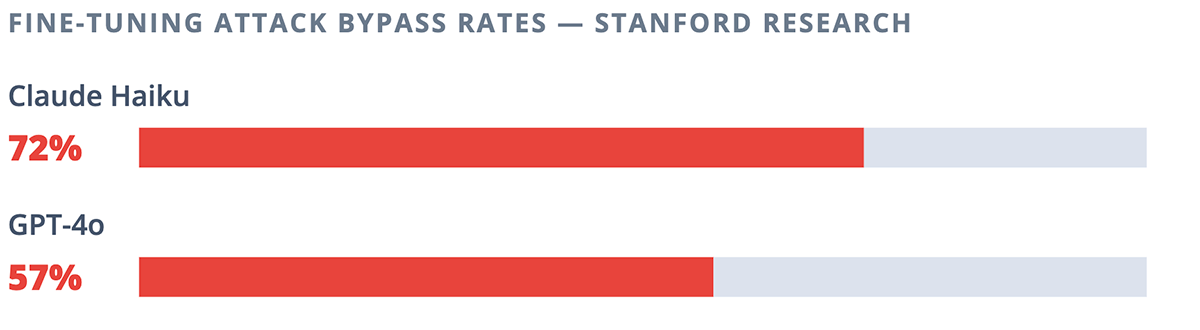

Stanford found fine-tuning attacks bypassed Claude Haiku in 72% of cases and GPT-4o in 57%. The Model Context Protocol (MCP) is the new attack surface.[14] & [15]

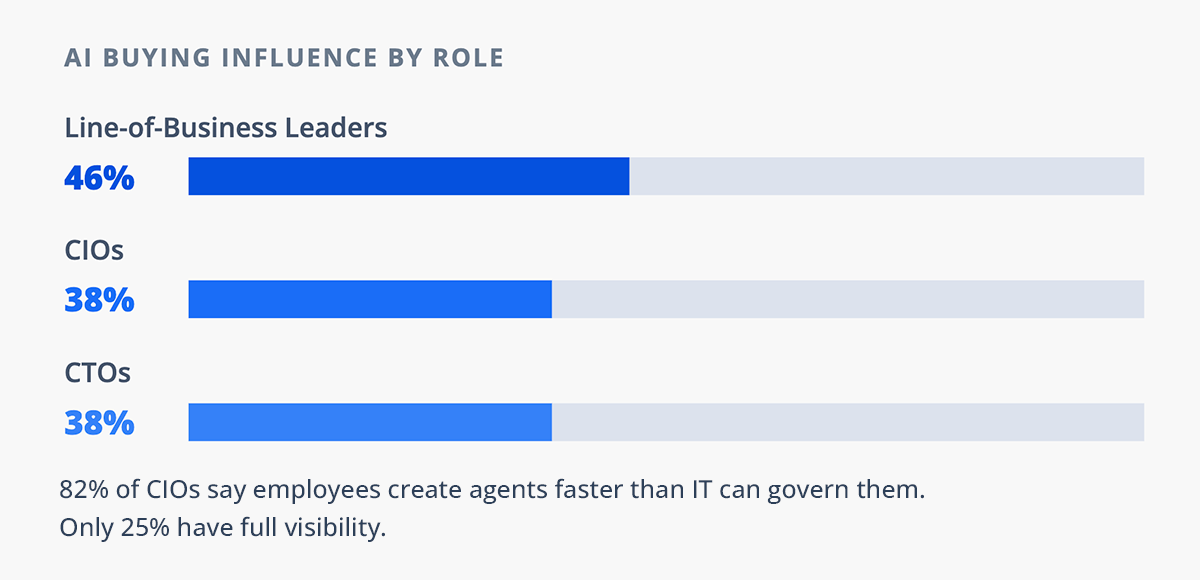

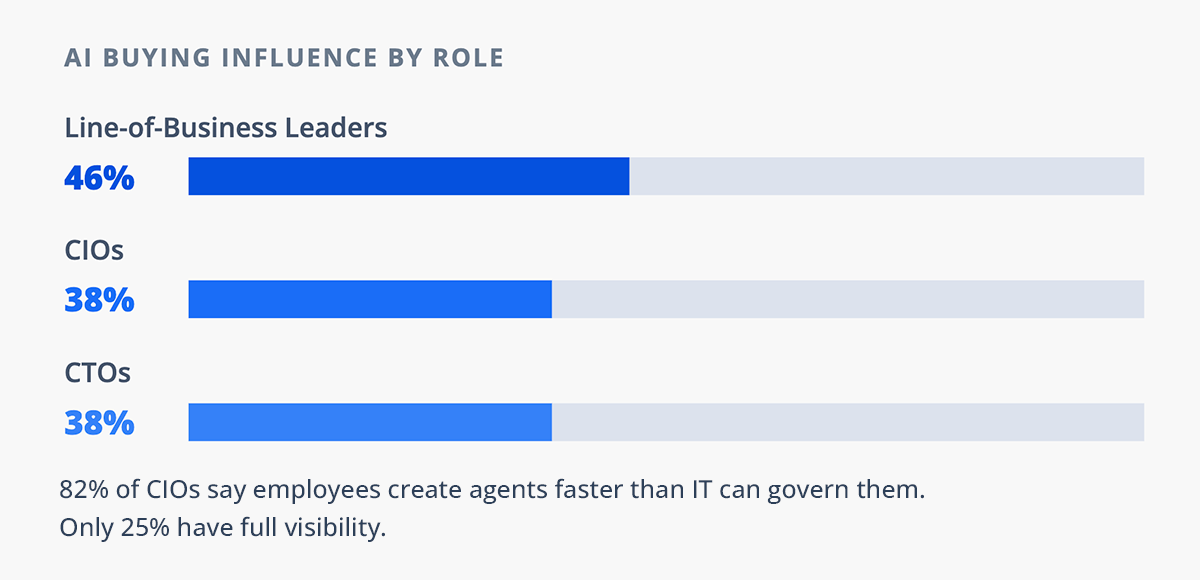

9. Line-of-Business Leaders Now Have More AI Buying Power than CIOs

Line-of-business leaders (46%) now outpace CIOs (38%) and Chief Technology Officers (CTOs) (38%) in AI buying influence.[16]

- 70% want self-serve trials first, meaning agents hit production before security has visibility

- Dataiku and Harris Poll: 82% of CIOs say employees create agents faster than IT can govern them, and only 25% have full visibility.[17]

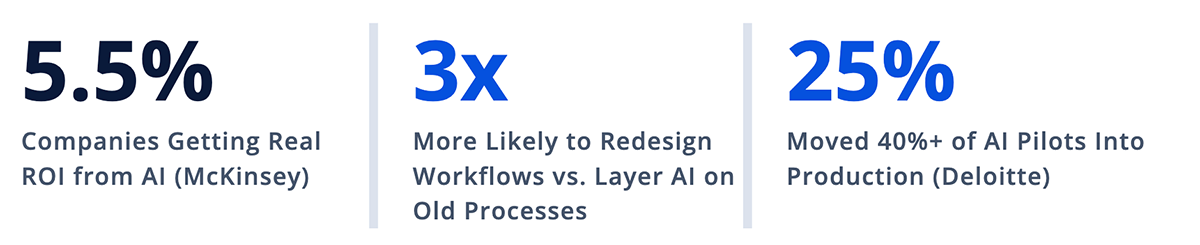

10. McKinsey Says Only 5.5% of Companies Are Getting Real Return on Investment from AI

McKinsey says only 5.5% of companies qualify as high performers, where AI meaningfully contributes to Earnings Before Interest and Taxes (EBIT). These companies are 3x more likely to have redesigned workflows rather than layering AI onto existing processes.[18]

Deloitte says only 25% of organizations have moved 40% or more of their pilots into production, and only one in five has a mature governance model.[19]

11. AI Agents Are Creating a New Class of Supply Chain Risk

Chainguard discovered malicious AI agent skills on popular platforms instructing coding assistants to install malware, distributing 2,200+ variants of the Atomic macOS Stealer.

A separate OpenAI plugin ecosystem attack compromised multiple enterprise deployments. When Agent A delegates to Agent B using credentials from Service C, the blast radius extends to every connected node.[20] & [21]
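The delegation chain described above can be modeled as a directed graph, and the blast radius of a compromised agent is then everything reachable from it. The sketch below is a rough illustration using a breadth-first traversal. The node names (`agent_a`, `service_c`, `billing_db`, and so on) and the edge semantics ("can act on, or holds credentials for") are hypothetical and not drawn from any of the cited incidents.

```python
from collections import deque


def blast_radius(graph: dict, start: str) -> set:
    """Return every node reachable from `start`, excluding `start` itself."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen - {start}


# Hypothetical environment: an edge X -> Y means X can act on Y
# or holds credentials that grant access to Y.
access_graph = {
    "agent_a": {"agent_b"},                   # A delegates tasks to B
    "agent_b": {"service_c"},                 # B uses credentials from C
    "service_c": {"billing_db", "crm_api"},   # C's credentials reach these systems
    "agent_d": {"hr_db"},                     # unrelated agent, not reachable from A
}

exposed = blast_radius(access_graph, "agent_a")
print(sorted(exposed))
```

In this toy graph, compromising `agent_a` exposes four downstream nodes even though the agent itself has only one direct edge, which is the point of mapping delegation explicitly.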

GSPANN's Take:

The Enterprises That Win the AI Race Will Be the Ones That Govern the Execution Layer and Not Just the Model Layer

Moving fast with AI and governing AI are the same strategy. The 12x production multiplier proves governance is the scaling mechanism, not the constraint. To put that into practice:

- Audit every agent identity in your environment

- Treat agents like employees with lifecycle management, including onboarding, access reviews, and offboarding

- Build governance into the execution layer, not just the model procurement layer
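As a starting point for the audit and lifecycle steps above, the following sketch keeps a simple inventory of agent identities and flags the ones that need attention. It is an assumption-laden illustration: the `AgentIdentity` fields, the 90-day `REVIEW_INTERVAL`, and the finding labels are all hypothetical, and a real deployment would pull this data from an identity provider rather than hard-coding it.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Set

REVIEW_INTERVAL = timedelta(days=90)  # assumed access-review cadence


@dataclass
class AgentIdentity:
    agent_id: str
    owner: Optional[str]   # accountable human; None means nobody owns the agent
    scopes: Set[str]       # permissions granted at onboarding
    onboarded: date
    last_review: date
    active: bool = True


def audit(agent: AgentIdentity, today: date) -> List[str]:
    """Access review: return findings for one active agent identity."""
    findings = []
    if not agent.active:
        return findings
    if agent.owner is None:
        findings.append("no_owner")
    if today - agent.last_review > REVIEW_INTERVAL:
        findings.append("review_overdue")
    return findings


def offboard(agent: AgentIdentity) -> None:
    """Offboarding: revoke every scope and mark the identity inactive."""
    agent.scopes.clear()
    agent.active = False


today = date(2026, 6, 1)
inventory = [
    AgentIdentity("invoice-bot", "finance-ops", {"erp:read"},
                  date(2025, 11, 1), date(2026, 5, 1)),
    AgentIdentity("legacy-scraper", None, {"crm:read", "crm:write"},
                  date(2025, 3, 1), date(2025, 9, 1)),
]

for agent in inventory:
    findings = audit(agent, today)
    if findings:
        print(agent.agent_id, findings)
        offboard(agent)  # in practice this would likely go through a human approval step
```

Running the same audit on a schedule turns the one-time inventory into ongoing lifecycle management.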

Organizations that treat agent governance as an afterthought will learn what an autonomous insider can do. Those that treat it as infrastructure will scale faster, with fewer incidents, and with board-level confidence.