Many enterprises look for managed services to reduce the effort spent on operations such as server configuration management, infrastructure provisioning and maintenance, autoscaling, and disaster recovery. Serverless services are a kind of managed service that can scale down to zero when no requests are made to the service or application. With serverless services, the cloud provider takes care of server maintenance under service-level agreements (SLAs) and service-level objectives (SLOs).

A container is a package of software components and programs that runs in an isolated environment. Containers are lightweight and portable: the same image can run on any platform that provides a compatible container runtime. In this blog, we will discuss one of Google Cloud's key containerized, serverless offerings: Cloud Run.

Cloud Run is a way to build microservice-style applications, such as data processing and conversion jobs, batch jobs, scheduled jobs, and on-demand jobs that push data updates from a source to a destination. Because batch and scheduled jobs run only on a schedule or when triggered manually, there is no need to allocate fixed resources for them: Cloud Run provides sufficient resources for a limited time in a highly scalable environment, so these jobs can finish faster. Cloud Run is built on Knative, a Kubernetes-based platform for serverless workloads.

Features of Knative

- Scales to zero when there are no requests – when no requests arrive, the resources are freed so they can be used by other processes.

- Request-driven compute model – compute resources are allocated in response to incoming requests.

- Container orchestration – handles scaling, management, and resource allocation for containers.

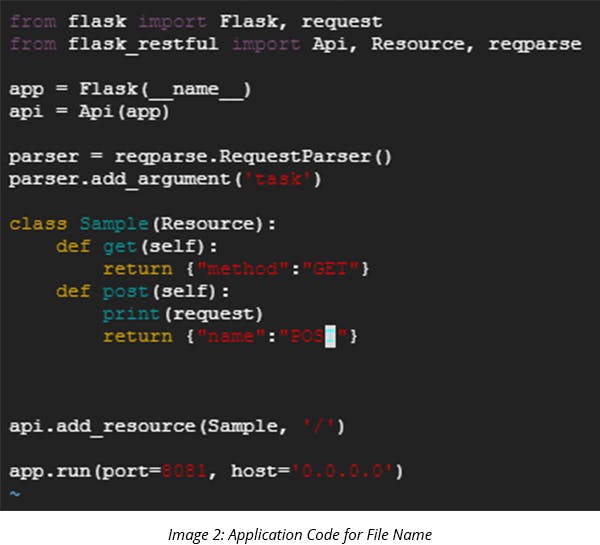

Cloud Run is developer-friendly: you deploy a service or application from a Docker image and invoke it over HTTP. The main effort required is building a custom Docker image of the application. By default, each Cloud Run service exposes a unique endpoint, and you can also configure a custom domain name for the service or application.
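Because Cloud Run delivers requests to the container over HTTP, the application inside the image has to run a web server. Cloud Run tells the container which port to listen on through the PORT environment variable (8080 unless you change the container port). The minimal sketch below uses only Python's standard library instead of Flask; the helper names, handler, and response text are illustrative assumptions, not the article's flask-app.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def get_port(default: int = 8080) -> int:
    """Return the port to listen on.

    Cloud Run passes the configured container port in the PORT environment
    variable; fall back to 8080 when it is not set (e.g. when run locally).
    """
    return int(os.environ.get("PORT", str(default)))


class HelloHandler(BaseHTTPRequestHandler):
    """Answer every GET request with a short plain-text response."""

    def do_GET(self) -> None:
        body = b"Hello from Cloud Run!\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port: int) -> HTTPServer:
    # Listen on all interfaces; binding only to 127.0.0.1 would make the
    # app unreachable from outside the container.
    return HTTPServer(("0.0.0.0", port), HelloHandler)
```

In the container's entry point, the server would be started with `make_server(get_port()).serve_forever()`. Reading the port from the environment, rather than hard-coding it, keeps the image working whatever container port is configured in Cloud Run.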

Let’s look at three core concepts of Cloud Run:

- Service: The main resource in Cloud Run. Each service runs in a single region, is automatically replicated across multiple zones within that region, scales quickly, and gets a unique URL.

- Revision: Similar to a version, a revision is an immutable bundle of a container image and its configuration, such as environment variables, memory limit, and concurrency value (the maximum number of requests a container instance can handle at the same time). By default, traffic is routed to the latest revision.

- Container Instance: The container that runs and serves traffic. When the number of concurrent requests on an instance reaches the concurrency value (80 by default; this can be changed), a new container instance is launched. Depending on the request load, a service can scale out to as many as 1,000 container instances.
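The relationship between concurrency and instance count can be sketched as a small calculation. This is a simplified model under stated assumptions: the real Cloud Run autoscaler also weighs factors such as CPU utilization, and `instances_needed` is a hypothetical helper whose defaults (80 and 1,000) are taken from the numbers above.

```python
def instances_needed(concurrent_requests: int,
                     concurrency: int = 80,
                     max_instances: int = 1000) -> int:
    """Estimate container instances for a given number of in-flight requests.

    Simplified model: each instance handles at most `concurrency` requests at
    once, no traffic means no instances (scale to zero), and the count is
    capped at `max_instances`.
    """
    if concurrent_requests < 0 or concurrency < 1 or max_instances < 0:
        raise ValueError("load and limits must be non-negative; concurrency >= 1")
    # Integer ceiling division: 81 requests at concurrency 80 need 2 instances.
    needed = (concurrent_requests + concurrency - 1) // concurrency
    return min(needed, max_instances)
```

For example, 400 concurrent requests need 5 instances at the default concurrency of 80 but 40 instances at a concurrency of 10, which is why a very low concurrency value tends to multiply the number of instances.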

Cloud Run also supports Anthos: it can create a Kubernetes cluster or use an existing one to provide resources for services and applications.

Cloud Run Features

- Charges are based on usage: you pay for the requests served and the resources used while serving them.

- Auto-scaling is enabled by default without extra charges.

- Supports multiple versions (revisions) of a service. You can easily roll back and split traffic between revisions.

- It can scale down to zero when there are no requests.

- With Anthos, you can run Cloud Run applications on your existing clusters.
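To make "splitting traffic between revisions" concrete, the sketch below shows what a percentage split means statistically: with a 90/10 split, roughly one request in ten reaches the second revision. It is only an illustration, not how Cloud Run's routing layer is implemented, and the revision names are hypothetical.

```python
import random
from typing import Dict, Optional


def pick_revision(split: Dict[str, int],
                  rng: Optional[random.Random] = None) -> str:
    """Pick the revision that should serve a single request.

    `split` maps revision names to traffic percentages, e.g.
    {"stable": 90, "canary": 10}; the percentages must add up to 100.
    """
    if any(p < 0 for p in split.values()) or sum(split.values()) != 100:
        raise ValueError("percentages must be non-negative and sum to 100")
    rng = rng or random.Random()
    names = list(split)
    # Weighted random choice: a revision with 10% weight is picked for
    # roughly one request in ten.
    return rng.choices(names, weights=[split[n] for n in names], k=1)[0]
```

Routing 10,000 simulated requests through `pick_revision({"stable": 90, "canary": 10}, random.Random(42))` sends close to 10% of them to "canary"; setting a revision's share back to 0 stops it from receiving traffic, which is what a rollback amounts to.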

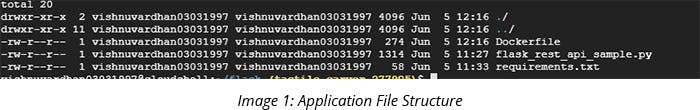

Steps to Deploy an Application on Cloud Run

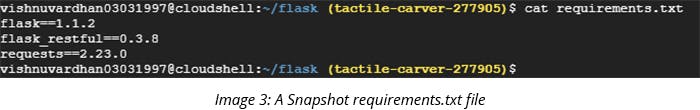

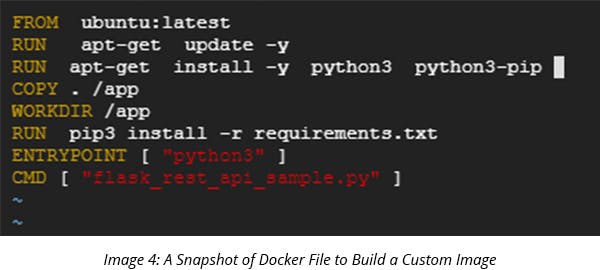

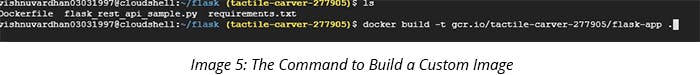

1. Build a custom image of your application using a Dockerfile.

2. To push the Docker image to Google Container Registry, name the image using the following convention:

[HOSTNAME]/[PROJECT-ID]/[IMAGE]:[TAG]

HOSTNAME : gcr.io

PROJECT-ID : your GCP project id

IMAGE : Image name (here, flask-app)

TAG : Version (defaults to latest if omitted)

3. Once the image is built, list it with the ‘docker images’ command.

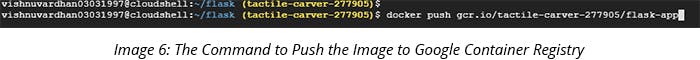

4. To make the image available to Cloud Run, push it to Google Container Registry.

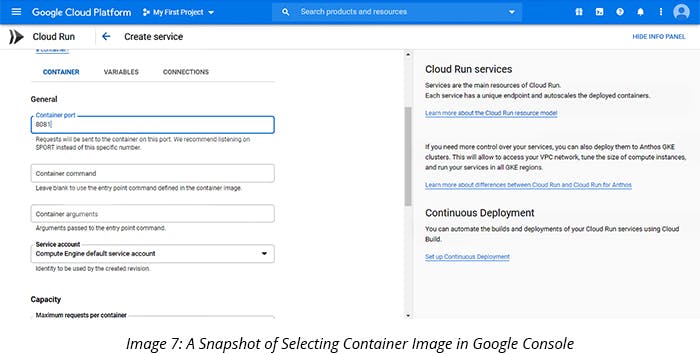

5. Once the image has been uploaded to Google Container Registry, go to Cloud Run and click ‘Create a Service’.

6. Enter the service name, choose the authentication option that fits your requirements, and click Next.

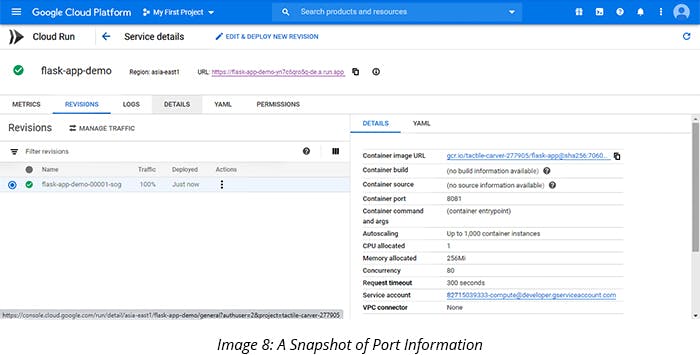

7. Select the container image, open the advanced settings, and enter the port that the application listens on. In this example, the application listens on port 8081, so we replaced the default value, 8080, with 8081. Click Create.

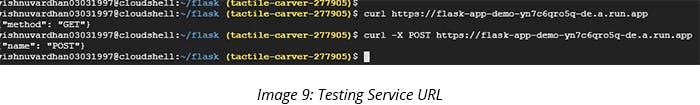

8. Your application is now deployed, and Cloud Run provides a unique URL to access the service. Open the URL to test it.
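Steps 1 to 4 can be scripted. The sketch below assembles an image name following the [HOSTNAME]/[PROJECT-ID]/[IMAGE]:[TAG] convention and the matching `docker build` and `docker push` commands. The helper names and the "my-project" project ID are hypothetical placeholders; the commands are only returned, not executed.

```python
from typing import List


def image_ref(project_id: str, image: str, tag: str = "latest",
              hostname: str = "gcr.io") -> str:
    """Build a Container Registry image name: HOSTNAME/PROJECT-ID/IMAGE:TAG."""
    parts = {"hostname": hostname, "project_id": project_id,
             "image": image, "tag": tag}
    for name, value in parts.items():
        if not value:
            raise ValueError(f"{name} must not be empty")
    return f"{hostname}/{project_id}/{image}:{tag}"


def docker_commands(ref: str, context: str = ".") -> List[List[str]]:
    """Return the build and push commands for `ref` as argument lists."""
    return [
        ["docker", "build", "-t", ref, context],  # step 1: build and name the image
        ["docker", "push", ref],                  # step 4: push it to the registry
    ]
```

For example, `image_ref("my-project", "flask-app")` yields `gcr.io/my-project/flask-app:latest`. Each command could then be run with `subprocess.run(cmd, check=True)`, assuming Docker is installed and authenticated to Container Registry (for instance via `gcloud auth configure-docker`).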

Congratulations! The service is now up and running. Cloud Run is a strong option for building microservices that take advantage of containerization.

Best Practices of Deploying an Application on Cloud Run

- Build an efficient Docker image: keep it small (for example, by using an Alpine-based base image), and in the Dockerfile, copy the source code only after the dependencies (such as Python and pip packages) are installed, so the dependency layers can be cached between builds.

- Don’t set the concurrency value too low. Keep the default value (80) or, for better performance, use a value between 50 and 80.

- When you deploy a new version (revision) of your service, don’t route all traffic to it immediately. Instead, route a small share (such as 10%) to it first and observe how the application or service responds.

- Set the memory limit according to the application's needs to avoid paying for unused memory.

- Enable ‘Automated Security Scanning’ for container images stored in the Container Registry.
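The gradual-rollout practice above can be sketched as a staged loop: give the new revision a growing share of traffic and route everything back to the old revision as soon as a health check fails. This is a hypothetical sketch: `canary_rollout`, the "new"/"old" labels, and the `is_healthy` callback (standing in for whatever error-rate or latency monitoring you use) are assumptions, and in practice each split would be applied through the console's traffic management or `gcloud run services update-traffic`.

```python
from typing import Callable, Dict, List, Sequence


def canary_rollout(stages: Sequence[int],
                   is_healthy: Callable[[int], bool]) -> List[Dict[str, int]]:
    """Return the traffic splits applied during a staged rollout.

    At each stage the new revision gets `percent` of traffic and the old one
    the rest. If `is_healthy(percent)` reports a problem, all traffic goes
    back to the old revision (rollback) and the rollout stops.
    """
    history: List[Dict[str, int]] = []
    for percent in stages:
        if not 0 < percent <= 100:
            raise ValueError("each stage must be in (0, 100]")
        history.append({"new": percent, "old": 100 - percent})
        if not is_healthy(percent):
            history.append({"new": 0, "old": 100})  # roll back
            break
    return history
```

With stages `[10, 50, 100]` and a check that always passes, the new revision ends up with 100% of traffic; if the check fails at 50%, the final split returns all traffic to the old revision.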

Cloud Run is serverless, containerized, and highly scalable, which makes it a strong option for tasks like event-based updates, scheduled tasks, data processing, and batch jobs. Because Cloud Run is built on Knative, existing services and applications can be migrated to it easily by packaging them as Docker images. Since it scales to zero and charges based on usage, Cloud Run can save significant compute resources and costs.