About the Client

A well-known American manufacturer of skincare products with $1.5B in annual sales.

The Challenge

Over the years, the company developed a complex IT infrastructure to share sales information with company management and its partners. The infrastructure includes SAP Commerce Cloud (formerly known as Hybris), Gigya, the Apache Solr search engine, and Salesforce Marketing Cloud, among other technologies.

The company’s business relies upon its sales partners, organized in a multi-level marketing arrangement. The company stored most of its sales data in a Hybris database, including customer and order data, product information, and coupons.

As business volume grew, the time taken to retrieve accurate, up-to-date data became unacceptably long. The company identified several bottlenecks that primarily stemmed from its reliance on REST API request-based components.

Our Information Analytics team was called in to support the company’s decision to move to a cloud-based streaming architecture based upon Apache Kafka.

In short, the company was looking to:

- Modernize their complex on-premises infrastructure: The company wanted to transition as much data infrastructure as possible from on-premises to the cloud.

- Minimize reliance upon a REST API-based architecture: The company's legacy REST API-based architecture was part of their problem. They needed help in transitioning to a modern real-time data streaming architecture based on Kafka.

- Make accurate sales forecasts: To make accurate sales forecasts and to best assist its partners, the company needed real-time access to data.

Our Solution

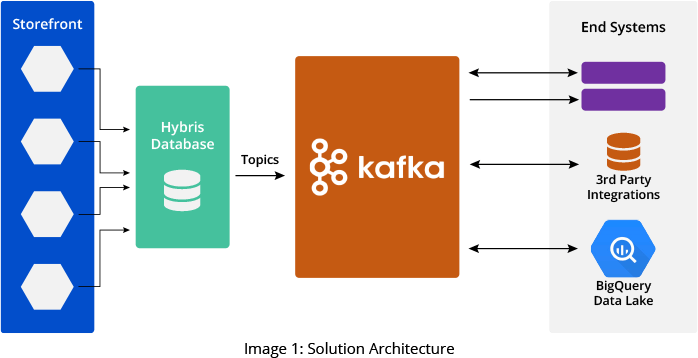

Our IA team developed a solution that sends data from the Hybris database to different third parties for reports and further processing. To achieve this, we developed a series of Kafka producers written in Java that read data from Hybris and publish it to Kafka topics. Then, we developed a set of Java-based Kafka consumers that poll the topics and send data to various end systems.
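The produce-then-poll flow described above can be illustrated with a small, standard-library-only sketch. It is an in-memory stand-in for a single-partition topic, not the Kafka client API and not the project's code; the class name `InMemoryTopic`, the group names, and the record values are all hypothetical. It shows how a producer appends records while each consumer group polls at its own pace and tracks its own offset.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical in-memory stand-in for a single-partition topic (not the Kafka API). */
class InMemoryTopic {
    // Append-only log of records, in the order they were published.
    private final List<String> log = new ArrayList<>();
    // Next unread position for each consumer group.
    private final Map<String, Integer> offsets = new HashMap<>();

    /** Producer side: append a record and return the offset it was stored at. */
    int publish(String record) {
        log.add(record);
        return log.size() - 1;
    }

    /** Consumer side: return up to maxRecords unread records and advance the group's offset. */
    List<String> poll(String groupId, int maxRecords) {
        int start = offsets.getOrDefault(groupId, 0);
        int end = Math.min(log.size(), start + maxRecords);
        List<String> batch = new ArrayList<>(log.subList(start, end));
        offsets.put(groupId, end);
        return batch;
    }

    public static void main(String[] args) {
        InMemoryTopic orders = new InMemoryTopic();
        orders.publish("order-1001");
        orders.publish("order-1002");

        // Two independent end systems read the same records without affecting each other.
        System.out.println("reporting: " + orders.poll("reporting", 10));
        System.out.println("marketing: " + orders.poll("marketing", 10));
    }
}
```

Because each group keeps its own offset, adding another end system in this model means adding another group rather than changing the producer, which is the decoupling that request-based integration lacks.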

Our IA team designed over 40 Kafka topics on Confluent Kafka brokers to manage various aspects of the business: customer enrollments, customer updates, orders, products, event registration, and many customer KPIs.

Additionally, we developed a matching set of Kafka consumers, also written in Java, to read the associated topic streams. The 40+ Kafka consumers were deployed on a Kubernetes engine in GCP. Our team configured multiple Kubernetes pods for each consumer, providing scalability and high availability.

Failed messages are stored in a catch-all Kafka topic called the Dead Letter Queue (DLQ) to avoid data loss. End-system consumers read the DLQ in addition to their designated topics. Where necessary, the consumers send messages that failed for technical reasons to the DLQ so that the data can be automatically resynced to consumer applications and other endpoints. DLQ messages that pertain to business failures are sent back to the e-commerce applications for reprocessing.
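The split between technical and business failures can be sketched as a small routing decision. This is a minimal illustration under stated assumptions, not the project's implementation: the names `DlqRouter`, `FailureType`, `Route`, and `FailedMessage` are hypothetical, and the sketch assumes each dead-lettered message already carries a label saying which kind of failure it was.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch of dead-letter routing; none of these names come from the project. */
class DlqRouter {

    /** Assumed failure categories, following the technical/business split in the text. */
    enum FailureType { TECHNICAL, BUSINESS }

    /** Where a dead-lettered message goes next. */
    enum Route { RESYNC_TO_END_SYSTEM, RETURN_TO_ECOMMERCE }

    /** A message that failed processing, with the topic it originally came from. */
    static final class FailedMessage {
        final String sourceTopic;
        final String payload;
        final FailureType failureType;

        FailedMessage(String sourceTopic, String payload, FailureType failureType) {
            this.sourceTopic = sourceTopic;
            this.payload = payload;
            this.failureType = failureType;
        }
    }

    /** Technical failures are resynced to the end system; business failures go back upstream. */
    static Route route(FailedMessage message) {
        return message.failureType == FailureType.TECHNICAL
                ? Route.RESYNC_TO_END_SYSTEM
                : Route.RETURN_TO_ECOMMERCE;
    }

    /** Selects the payloads in a DLQ batch that should take the given route. */
    static List<String> payloadsFor(List<FailedMessage> dlqBatch, Route wanted) {
        List<String> selected = new ArrayList<>();
        for (FailedMessage message : dlqBatch) {
            if (route(message) == wanted) {
                selected.add(message.payload);
            }
        }
        return selected;
    }

    public static void main(String[] args) {
        List<FailedMessage> dlqBatch = List.of(
                new FailedMessage("orders", "order-1001", FailureType.TECHNICAL),
                new FailedMessage("orders", "order-1002", FailureType.BUSINESS));
        System.out.println("Resync: " + payloadsFor(dlqBatch, Route.RESYNC_TO_END_SYSTEM));
        System.out.println("Return: " + payloadsFor(dlqBatch, Route.RETURN_TO_ECOMMERCE));
    }
}
```

Keeping the classification separate from the handling means a retry policy for technical failures can change without touching how business failures are returned for correction.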

Our team used Kafka's analytics and monitoring APIs, together with Prometheus, to pull KPIs such as consumer lag, active connections, and traffic volume into a handler application to ensure consistent performance. We also set performance thresholds and configured alerts. Our team used Splunk to search logs during debugging.
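Consumer lag, one of the KPIs named above, is commonly defined per partition as the latest offset written to the log minus the offset the consumer group has committed. The sketch below shows that arithmetic and a threshold check. It is illustrative only: the class `ConsumerLagCheck`, the offsets, and the threshold value are hypothetical, and treating a partition with no committed offset as starting from 0 is an assumption made for the example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical lag calculation and threshold check; not taken from the project. */
class ConsumerLagCheck {

    /** Lag for one partition: records written but not yet committed by the group. */
    static long lag(long logEndOffset, long committedOffset) {
        return Math.max(0L, logEndOffset - committedOffset);
    }

    /** Sums lag across partitions; both maps are keyed by partition number. */
    static long totalLag(Map<Integer, Long> logEndOffsets, Map<Integer, Long> committedOffsets) {
        long total = 0L;
        for (Map.Entry<Integer, Long> entry : logEndOffsets.entrySet()) {
            // Assumption: a partition with no committed offset is read from offset 0.
            long committed = committedOffsets.getOrDefault(entry.getKey(), 0L);
            total += lag(entry.getValue(), committed);
        }
        return total;
    }

    /** An alert fires only when total lag is strictly above the threshold. */
    static boolean shouldAlert(long totalLag, long threshold) {
        return totalLag > threshold;
    }

    public static void main(String[] args) {
        Map<Integer, Long> logEnd = new LinkedHashMap<>();
        logEnd.put(0, 120L);
        logEnd.put(1, 80L);

        Map<Integer, Long> committed = new LinkedHashMap<>();
        committed.put(0, 100L);
        committed.put(1, 80L);

        long total = totalLag(logEnd, committed); // (120 - 100) + (80 - 80) = 20
        System.out.println("total lag = " + total + ", alert = " + shouldAlert(total, 10L));
    }
}
```

In a setup like the one described, the offsets would come from the monitoring APIs and the threshold comparison would typically live in the alerting configuration rather than in application code.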

Key takeaways from the solution include:

- Shift to a cloud-based streaming infrastructure: Our team assisted the company in moving away from a REST API-based on-premises system to a modern cloud-based real-time data streaming infrastructure.

- No data loss: The new system can handle a massive amount of company data with no loss. The solution also captures any failed data in the catch-all Kafka DLQ topic and reprocesses it.

- Zero downtime: The new system features multiple redundant cloud-based virtual machines that ensure zero downtime.

Business Impact

- Real-time access to data allows rapid response: The company and its partners now have real-time access to data, allowing them to make fast and well-informed business decisions, which is essential in today’s fast-changing world.

- High availability increases partner satisfaction: The solution features multiple redundancies ensuring 100% uptime. With its performance threshold alerts, the new system’s monitoring allows the company to proactively address potential issues before they affect system performance.

- Rapidly shift market focus without impacting operations: Kafka makes it easier to add topics and consumers without restarting the entire system.

- Cost-effective future expansion: The cloud-based real-time data streaming solution can dynamically upscale its bandwidth, processing power, and storage space, which allows the business to grow without additional development or hardware investment.

Related Capabilities

Utilize Actionable Insights from Multiple Data Hubs to Gain More Customers and Boost Sales

Unlock the power of data insights buried deep within your diverse systems across the organization. We empower businesses to effectively collect, beautifully visualize, critically analyze, and intelligently interpret data to support organizational goals. Our team ensures good returns on the big data technology investment with effective use of the latest data and analytics tools.

Technologies Used

- Confluent Kafka

- Prometheus

- SAP Commerce Cloud (Hybris)

- GCP BigQuery

- GCP Firestore

- SAP Gigya

- Kubernetes

- Oracle Java