About the Client

A leading global manufacturer of skincare products.

The Challenge

The company's existing SQL server had performance issues that caused database deadlocks. The performance issues also resulted in higher maintenance of server environments and impacted other scheduled jobs. They also noticed some bottlenecks from the reporting and ETL (Extract, Transform, and Load) layers. Further investigation uncovered infrastructural issues with static CPU usage and memory limitations.

The company's current infrastructure had many dependencies that limited them from achieving a real-time view of their data. For example, end users wishing to obtain a new service-level agreement (SLA) had to wait up to 15 minutes.

They wanted to migrate all their components to a cloud environment that is capable of handling massive amounts of data and performing multiple processes simultaneously.

Several challenges were keeping the company from transitioning to the cloud. For instance, data technicians encountered huge data quality issues when attempting to export data from the company's SQL server systems. Another challenge was the extreme complexity involved when trying to implement the company development team's SQL Server Integration Services (SSIS) packages in Google Cloud – the company’s cloud platform of choice. To sum it up, the company was looking for:

- Accurate data: Latency and data accuracy were significant drawbacks in the company's SQL server implementation. By removing the dependency on SQL Server, the company can move towards a platform that can enhance their data and provide rich analytics and highly accurate reports.

- Fast access: The company wanted to improve their ETL performance and improve query speeds.

- Data uniformity: Current data structures were rigid and inconsistent. The company wanted to employ a flexible data model and regain mastery of their data by standardizing its KPIs.

- Cost control: When moving to a cloud environment, the company needed to control costs. They wanted to reduce the number of bytes read by a query. Further, they were looking for a way to de-normalize data for reporting needs to fetch data in the smallest time interval possible to minimize usage costs.

- Modern analytics and reporting: The company's current SQL Server Reporting Services (SSRS) reports and their self-service and data visualization services suffered performance issues. The existing tools were also tricky to use and lacked up-to-date analytics capabilities.

Our Solution

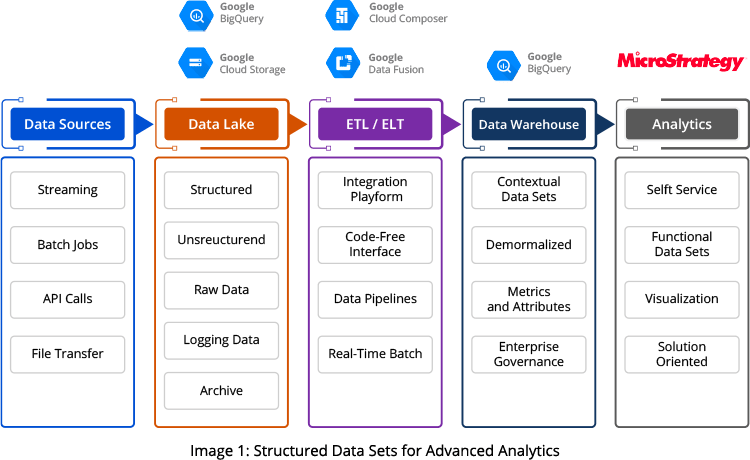

After carefully analyzing the company's goals and current infrastructure, our Information Analytics team used a combination of tools, including Google BigQuery, Google Cloud Services, Apache Airflow, and Cloud Composer, to provide a viable solution. This solution allowed the company to source, load, and transform the data extracted from a data lake into meaningful data sets suitable for advanced analytics.

The source data is first loaded into Google Cloud Storage (GCS) buckets and a BigQuery data lake from Kafka jobs. From GCS and data lake, data undergoes an ETL/ELT (extract transform load/extract load transfer) process in Google Data Fusion before being transferred into BigQuery. At this point, the correlated data is enriched and transformed in Google BigQuery and is available for the company's various analytical and reporting needs.

Key benefits of our solution:

- Captures data aligned with business goals: The new system captures and transfers data from different sources per business requirements with 85% reduced latency and 99% improved data accuracy.

- Process massive data volumes using comprehensive custom tools: Google BigQuery provides comprehensive tools designed to operate on a vast amount of data and improve query performance through its aggregate data partitioning and clustering features.

- Get near real-time actionable data: As part of the solution, our team migrated the company's API and fraud-checking jobs to BigQuery. The move reduced large file processing issues that previously occurred every week, month, and quarter. We also configured the scheduled jobs as BigQuery scripts that scan a minimal data subset at customized intervals.

Business Impact

The solution represents a major new feature for the company and provides several benefits, including:

- A deeper understanding of customers: The subject data, including account, order, and sales information, are now readily available. This gives the company deep insights into customer behavior, allowing it to precisely tailor product offerings, improving business.

- Accurate future trend prediction: The highly accurate, readily available data allows the company to generate sales forecasts and analyze trends. It will enable it to reallocate resources and respond to changing market needs quickly. This ability gives the company an advantage over its competition.

- 500% performance improvement: SSIS/SQL jobs took up to 3.5 hours to complete in the company's old system. The new solution now returns results in a maximum of 40 mins for each job. In BigQuery, the company has near real-time access to its data.

Related Capabilities

Utilize Actionable Insights from Multiple Data Hubs to Gain More Customers and Boost Sales

Unlock the power of the data insights buried deep within your diverse systems across the organization. We empower businesses to effectively collect, beautifully visualize, critically analyze, and intelligently interpret data to support organizational goals. Our team ensures good returns on the big data technology investments with the effective use of the latest data and analytics tools.

Related Services

Technologies Used

- Google BigQuery

- Google Cloud Storage

- Google Data Fusion

- Apache Airflow

- Cloud Composer